
One of the most consistently requested things from developers integrating our service into their software has been an OpenAPI specification, and today we're happy to say the wait is over. We've published an OpenAPI v3.1.0 specification covering both our v3 API and our Dashboard APIs, and it's available right now from our API documentation page.

Why Now?

With our v3 API preparing to leave open beta and become our new stable API, this felt like exactly the right moment to ship the spec. We wanted it in place before v3 goes stable so that developers building integrations from day one have a machine-readable description of the API to work with rather than hand-rolling clients purely from written documentation.

A spec has been on our users' wish list for years, and we're glad we were finally able to prioritise doing it properly. Producing a spec for an API that's still in active development is a balancing act, but the v3 API has now gone through several substantial beta releases and its structure has settled considerably, so the timing felt right.

What's Covered?

The specification covers both major surface areas of our API offering:

The v3 API

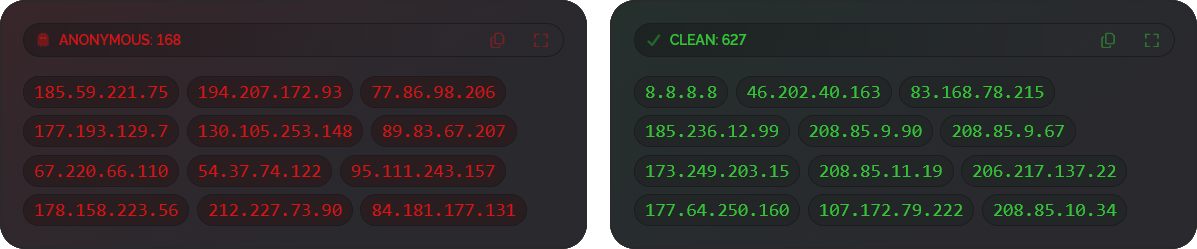

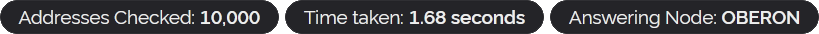

Our new-generation proxy, VPN and threat intelligence API. This includes IP and email address checking, the full response schema (network data, location, detections, risk scores, confidence scores, operator information, detection history, attack history and more), query flags, and all status and error response formats.

The Dashboard APIs

The full suite of programmatic dashboard controls, including positive detection exporting, tag exporting, account usage and query volume exporting, custom list manipulation (add, remove, set, clear, erase), custom rule management (print, enable, disable), and CORS origin list management.

All of this is described using OpenAPI v3.1.0. The 3.1 line of the OpenAPI specification is fully aligned with JSON Schema (draft 2020-12), which means you can use the spec directly with any modern tooling that supports OpenAPI 3.1.
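One practical consequence of the JSON Schema alignment is that a nullable field is written as a type array such as `["string", "null"]` instead of the OpenAPI 3.0 `nullable: true` keyword. The sketch below is a deliberately minimal checker that understands only the `type`, `properties` and `required` keywords, just enough to show how a 3.1-style schema reads. The `operator_schema` fragment and its field names are hypothetical and not taken from our spec; for real validation, a complete JSON Schema 2020-12 validator is the better choice.

```python
from typing import Any

# JSON Schema type names mapped to checks on the Python values json.loads produces.
_TYPE_CHECKS = {
    "null": lambda v: v is None,
    "boolean": lambda v: isinstance(v, bool),
    "integer": lambda v: isinstance(v, int) and not isinstance(v, bool),
    "number": lambda v: isinstance(v, (int, float)) and not isinstance(v, bool),
    "string": lambda v: isinstance(v, str),
    "array": lambda v: isinstance(v, list),
    "object": lambda v: isinstance(v, dict),
}


def check(instance: Any, schema: dict, path: str = "$") -> list[str]:
    """Return a list of error messages; an empty list means the instance passed.

    Only the 'type', 'properties' and 'required' keywords are supported.
    """
    errors: list[str] = []
    declared = schema.get("type")
    if declared is not None:
        # In OpenAPI 3.1 / JSON Schema, 'type' may be one name or a list of names.
        allowed = [declared] if isinstance(declared, str) else declared
        if not any(_TYPE_CHECKS[t](instance) for t in allowed):
            errors.append(f"{path}: expected {allowed}, got {type(instance).__name__}")
            return errors
    if isinstance(instance, dict):
        for name in schema.get("required", []):
            if name not in instance:
                errors.append(f"{path}: missing required property '{name}'")
        # Recurse into any declared properties that are actually present.
        for name, sub in schema.get("properties", {}).items():
            if name in instance:
                errors.extend(check(instance[name], sub, f"{path}.{name}"))
    return errors


# Hypothetical schema fragment in 3.1 style: no 'nullable' keyword, the
# nullable string is declared with the type array ["string", "null"].
operator_schema = {
    "type": "object",
    "required": ["name", "tier"],
    "properties": {
        "name": {"type": "string"},
        "website": {"type": ["string", "null"]},
        "tier": {"type": "string"},
    },
}

print(check({"name": "ExampleVPN", "website": None, "tier": "paid"}, operator_schema))  # []
print(check({"name": "ExampleVPN", "website": 42}, operator_schema))
```

The second call reports both the missing `tier` property and the `website` value that is neither a string nor null.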

What Can You Do With It?

Having a formal OpenAPI spec opens up a lot of practical possibilities for developers:

- Generate a client library in your language of choice using tools like openapi-generator or Speakeasy. If your language isn't yet represented in our code libraries, this is the fastest path to a typed, fully featured client.

- Explore the API interactively by importing the spec into Swagger UI, Scalar, or Stoplight — useful for understanding the response schemas before you write a single line of code.

- Import it into Postman or Insomnia and have a ready-made collection of every endpoint, with example request parameters and expected response shapes already filled in.

- Validate your integration by using the spec to confirm that your code is constructing requests correctly and handling all documented response fields.

- Stay up to date — as the v3 API evolves post-beta we'll be keeping the spec in sync with the documentation, so you'll always have an authoritative reference.
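Because the spec is an ordinary JSON or YAML document, small scripts can work with it directly. As one example of staying up to date, the sketch below lists every operation a spec describes (each HTTP method under each entry in `paths`) and reports which operations were added or removed between two versions. It assumes you have saved the spec in JSON format (the `load_spec` path is a placeholder), and the two inline specs use hypothetical paths, not our real endpoints.

```python
import json
from pathlib import Path

# The HTTP methods an OpenAPI Path Item object may define operations for.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def load_spec(path: str) -> dict:
    """Load a JSON-format OpenAPI document from disk (the path is a placeholder)."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def operations(spec: dict) -> set[tuple[str, str]]:
    """Return every (METHOD, path) pair described under the spec's 'paths' object."""
    ops = set()
    for path, item in spec.get("paths", {}).items():
        for key in item:
            # Path Items can also hold non-operation keys such as 'parameters' or 'summary'.
            if key.lower() in HTTP_METHODS:
                ops.add((key.upper(), path))
    return ops


def diff_operations(old: dict, new: dict) -> tuple[set, set]:
    """Return (added, removed) operations between two versions of a spec."""
    before, after = operations(old), operations(new)
    return after - before, before - after


# Two tiny hypothetical specs; the paths are illustrative only.
old_spec = {"openapi": "3.1.0", "paths": {
    "/example/check": {"get": {}, "parameters": []},
}}
new_spec = {"openapi": "3.1.0", "paths": {
    "/example/check": {"get": {}, "post": {}},
    "/example/list": {"delete": {}},
}}

added, removed = diff_operations(old_spec, new_spec)
for method, path in sorted(added):
    print("added:  ", method, path)
for method, path in sorted(removed):
    print("removed:", method, path)
```

With real files you would call `diff_operations(load_spec("old.json"), load_spec("new.json"))`; comparing schemas as well as operations is a natural next step.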

Where to Find It

The spec is documented and available for download from the OpenAPI Spec section of our API documentation.

A Note on the v3 API Beta

For those who haven't been following the v3 beta, our v3 API is a significant overhaul of how we present data. It moves to a fully nested JSON structure, returns booleans instead of yes/no strings, always includes a rich set of detection data without requiring individual query flags, introduces proper HTTP status codes for denied and failed requests, and adds new sections like detection_history and attack_history. It also brings a confidence score alongside the risk score, giving you finer-grained control over how aggressively you act on detections.
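To illustrate how the risk and confidence scores can work together, the sketch below blocks only when a detection is present and both scores clear their own thresholds, and it treats any non-2xx HTTP status as a failed lookup rather than trying to parse a body. Every field name here (`detections`, `proxy`, `vpn`, `risk`, `confidence`), the 0 to 100 scale and the threshold values are hypothetical placeholders; check the response schema in the spec for the real names and ranges. It also includes a small helper for v2-style "yes"/"no" strings, for anyone porting existing logic.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Policy:
    # Hypothetical thresholds on an assumed 0-100 scale.
    min_risk: int = 66
    min_confidence: int = 75


def yes_no_to_bool(value: str) -> bool:
    """Convert a v2-style "yes"/"no" string to a boolean, as v3 returns natively."""
    if value not in ("yes", "no"):
        raise ValueError(f"expected 'yes' or 'no', got {value!r}")
    return value == "yes"


def should_block(status: int, body: Optional[dict[str, Any]], policy: Policy) -> Optional[bool]:
    """Return True/False for a decision, or None when the lookup itself failed.

    v3 uses proper HTTP status codes for denied and failed requests; this
    sketch assumes any non-2xx status means there is no usable result.
    """
    if not 200 <= status < 300 or body is None:
        return None  # the caller decides whether to fail open or closed
    # Hypothetical nested layout:
    # {"detections": {"proxy": bool, "vpn": bool, "risk": int, "confidence": int}}
    det = body.get("detections", {})
    flagged = bool(det.get("proxy")) or bool(det.get("vpn"))
    return (
        flagged
        and det.get("risk", 0) >= policy.min_risk
        and det.get("confidence", 0) >= policy.min_confidence
    )


policy = Policy()
print(should_block(200, {"detections": {"vpn": True, "risk": 80, "confidence": 90}}, policy))  # True
print(should_block(200, {"detections": {"vpn": True, "risk": 80, "confidence": 40}}, policy))  # False
print(should_block(429, None, policy))  # None
```

Requiring both scores to pass lets you keep a fairly low risk threshold while still avoiding action on low-confidence results; raising or lowering `min_confidence` is one way to tune how aggressively you act.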

If you're currently on v2, there's no rush. We're committed to supporting the v2 API until at least 2035. But if you're starting a new integration, v3 is where you want to be, and the OpenAPI spec is the best place to start.

As always, if you have any feedback — on the spec, the API, or anything else — please get in touch. We do read and reply to every message. Thanks for reading and have a wonderful week!